TL;DR

- Solo founder shipped 40+ features using three specialized Claude Code agents as PM, Designer, and Engineer

- Development time dropped from 6-8 hours to 2-3 hours per feature ($15-40 in tokens)

- Key tool: Shared markdown “hub file” for agent coordination and handoffs

- Best for: Solo founders and small teams who need to wear multiple hats

- Key lesson: Specialized agents with narrow focus hallucinate less than generalist agents

A solo founder discovered that running three specialized Claude Code agents (Product Manager, Designer, Engineer) cut his feature development time by roughly 60% and produced documentation he had never had before.

George had been a solo founder for eighteen months.

Every feature he shipped required him to wear three hats: product manager, designer, and engineer. He’d write the requirements. Mock up the interface. Build the code. Review his own work.

“I was burning out playing three roles badly instead of one role well.”

Then he discovered multi-agent orchestration — and built himself a team.

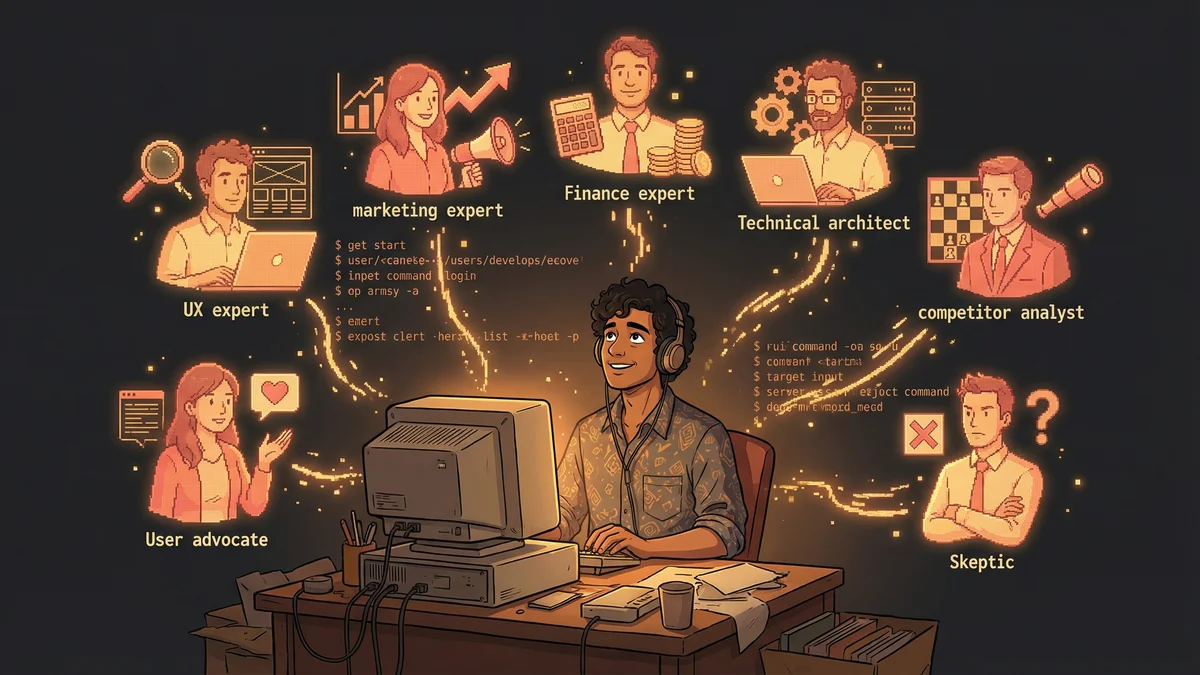

The Three Roles

George set up three separate Claude Code instances, each with a distinct persona:

The Product Manager Agent: “You are a senior product manager. Your job is to take vague ideas and turn them into comprehensive Product Requirements Documents. Be rigorous. Ask clarifying questions. Define edge cases. Specify acceptance criteria.”

The UX Designer Agent: “You are a UX designer focused on simple, functional interfaces. You receive PRDs and translate them into concrete design specifications. Create component hierarchies. Define interaction patterns. Output design systems, not mockups.”

The Engineering Lead Agent: “You are a senior software engineer. You receive requirements and designs, then implement clean, tested code. Follow the specs exactly. Don’t improvise on requirements — ask if something is unclear.”

Same underlying AI. Three different operating modes.
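The article runs each persona in its own Claude Code instance. If you drove the model through an API instead, "same AI, three modes" comes down to swapping the system prompt while everything else stays fixed. Below is a minimal sketch that assumes a payload shaped like Anthropic's Messages API request (`model`, `max_tokens`, `system`, `messages`). `build_request`, the role keys, and wrapping upstream documents in tags are hypothetical choices, and the model ID is a placeholder you must supply. The persona text is adapted from the prompts above.

```python
from typing import Dict, List, Optional

# Persona prompts adapted from the article; keying them by role name is an assumption.
PERSONAS: Dict[str, str] = {
    "pm": (
        "You are a senior product manager. Your job is to take vague ideas and turn "
        "them into comprehensive Product Requirements Documents. Be rigorous. Ask "
        "clarifying questions. Define edge cases. Specify acceptance criteria."
    ),
    "designer": (
        "You are a UX designer focused on simple, functional interfaces. You receive "
        "PRDs and translate them into concrete design specifications. Create component "
        "hierarchies. Define interaction patterns. Output design systems, not mockups."
    ),
    "engineer": (
        "You are a senior software engineer. You receive requirements and designs, then "
        "implement clean, tested code. Follow the specs exactly. Don't improvise on "
        "requirements. Ask if something is unclear."
    ),
}


def build_request(role: str, task: str, model: str,
                  context: Optional[Dict[str, str]] = None,
                  max_tokens: int = 4096) -> dict:
    """Build a Messages-API-style payload: same model, persona swapped via `system`."""
    parts: List[str] = []
    # Attach upstream artifacts (e.g. the PRD for the designer) as labelled sections.
    for name, text in (context or {}).items():
        parts.append(f"<{name}>\n{text}\n</{name}>")
    parts.append(task)
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": PERSONAS[role],
        "messages": [{"role": "user", "content": "\n\n".join(parts)}],
    }
```

With the official Python SDK, a payload like this should be usable as `anthropic.Anthropic().messages.create(**req)`. Only the `system` field differs between the three roles.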

The First Experiment

George started with a feature request: “Add a Pomodoro timer to the productivity app.”

Simple enough to test the workflow. Complex enough to require real thought.

Phase 1: The PM Agent

George gave the PM agent a one-sentence brief: “Users want a Pomodoro timer.”

What came back surprised him.

The agent asked clarifying questions:

- “Should users be able to customize work/break durations?”

- “Do timers persist across sessions?”

- “Are there notifications? Sound? Visual?”

- “Does timer usage integrate with existing productivity metrics?”

George answered each question. The PM agent continued refining until it produced a 12-page PRD covering:

- User stories with acceptance criteria

- Edge cases (browser tab closed, system sleep, offline mode)

- Data model requirements

- API endpoint specifications

- Success metrics
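Two of the edge cases the PM agent listed, a closed tab and system sleep, share a common remedy. Store timestamps instead of counting ticks, then recompute the remaining time whenever the app wakes up. Below is a minimal sketch. It assumes the caller passes wall-clock seconds (for example from `time.time()`) and persists `started_at` and `banked_s` somewhere durable. The class and field names are hypothetical and do not come from George's PRD.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PomodoroTimer:
    duration_s: float                  # length of the work interval in seconds
    started_at: Optional[float] = None  # wall-clock time of the last start; None while paused
    banked_s: float = 0.0               # elapsed seconds accumulated before the last pause

    def start(self, now: float) -> None:
        # Starting an already-running timer is a no-op.
        if self.started_at is None:
            self.started_at = now

    def pause(self, now: float) -> None:
        # Bank the time run so far and stop the clock.
        if self.started_at is not None:
            self.banked_s += now - self.started_at
            self.started_at = None

    def elapsed(self, now: float) -> float:
        running = (now - self.started_at) if self.started_at is not None else 0.0
        return self.banked_s + running

    def remaining(self, now: float) -> float:
        # Derived from timestamps, so time spent asleep or with the tab closed still counts.
        return max(0.0, self.duration_s - self.elapsed(now))
```

Nothing is lost when a sleeping laptop or a closed tab stops calling `remaining`. The next call measures the gap from the stored timestamp.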

“I’d never written a PRD that thorough for a small feature. The PM agent didn’t have the ‘that’s good enough’ instinct that speeds human work and creates gaps.”

Phase 2: The Designer Agent

George fed the PRD to the designer agent.

The designer read the requirements and produced:

- A component hierarchy (Timer → Display → Controls → Settings)

- State definitions (idle, running, paused, break, complete)

- Interaction patterns (click to start, click to pause, long-press to reset)

- Visual specifications (colors, typography, spacing)
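The designer's state list maps naturally onto an explicit transition table. Below is a minimal sketch with hypothetical event names (`click`, `long_press`, `work_done`, `break_done`). The article names the five states and the three gestures but not every edge, so the transitions here are one plausible reading rather than George's actual spec.

```python
from enum import Enum


class State(Enum):
    IDLE = "idle"
    RUNNING = "running"
    PAUSED = "paused"
    BREAK = "break"
    COMPLETE = "complete"


# Hypothetical transition table built from the states and gestures in the design spec.
TRANSITIONS = {
    (State.IDLE, "click"): State.RUNNING,        # click to start
    (State.RUNNING, "click"): State.PAUSED,      # click to pause
    (State.PAUSED, "click"): State.RUNNING,      # click again to resume
    (State.RUNNING, "work_done"): State.BREAK,   # work interval elapsed
    (State.BREAK, "break_done"): State.COMPLETE,  # break elapsed
}


def next_state(state: State, event: str) -> State:
    if event == "long_press":  # long-press resets from any state
        return State.IDLE
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"invalid transition: {event!r} in state {state.value}") from None
```

With an explicit table, spec gaps become visible. Any state and gesture pair the designer did not define raises an error instead of silently doing something.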

No pixel-perfect mockups. But a design system clear enough to implement.

“The designer didn’t waste time on pretty pictures I’d just have to interpret anyway. It gave me a spec I could code from.”

Phase 3: The Engineer Agent

The engineer agent received the PRD and design spec. Then it built.

Backend endpoints for timer CRUD. Frontend components matching the hierarchy. State management following the defined transitions. Tests covering the acceptance criteria.

“The code wasn’t perfect. But it was close. The engineer agent made decisions matching what I would have chosen — because the specs were clear enough to eliminate ambiguity.”
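The three phases form a simple chain in which every agent sees the original brief plus everything produced upstream. Below is a minimal sketch. `run_pipeline`, the artifact names, and the `run_agent` callback (which would wrap whatever actually invokes the model) are all hypothetical. The sketch also collapses the PM's back-and-forth clarifying questions into a single call.

```python
from typing import Callable, Dict, List, Tuple

# Phase order from the article; the artifact names are hypothetical.
PHASES: List[Tuple[str, str]] = [
    ("pm", "PRD"),
    ("designer", "DESIGN"),
    ("engineer", "IMPLEMENTATION"),
]

# run_agent(role, inputs) -> artifact text; supplied by the caller.
AgentFn = Callable[[str, Dict[str, str]], str]


def run_pipeline(brief: str, run_agent: AgentFn) -> Dict[str, str]:
    """Run PM, then Designer, then Engineer; each sees the brief plus all upstream artifacts."""
    artifacts: Dict[str, str] = {"BRIEF": brief}
    for role, output_name in PHASES:
        # Hand over a copy so one agent's call cannot alter what later agents receive.
        artifacts[output_name] = run_agent(role, dict(artifacts))
    return artifacts
```

The engineer's input therefore includes both the PRD and the design spec, matching the handoff described above.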

The Orchestration Layer

Running three instances manually worked as a proof of concept. For production use, George needed automation.

He created a simple orchestration system:

The Hub File (MULTI_AGENT_PLAN.md):

```markdown
# Feature: Pomodoro Timer

## Current Phase
Engineering (Phase 3 of 3)

## Status
- [x] PM: PRD complete (docs/PRD-pomodoro.md)
- [x] Design: Spec complete (docs/DESIGN-pomodoro.md)
- [ ] Engineering: Implementation in progress

## Handoff Log
- PM → Designer: Ready (2025-01-10 14:32)
- Designer → Engineer: Ready (2025-01-10 16:45)

## Blocked By
None

## Next Steps
1. Engineer completes implementation
2. Integration tests
3. PM reviews against acceptance criteria
```

Each agent read this file before acting and updated it after completing work. The file became the communication channel.
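Because the hub file is plain Markdown, a short script can tell whose turn it is and append handoff entries in the same format. Below is a minimal sketch. It assumes the checklist and handoff-log formats in the hub file stay stable. `parse_status`, `current_owner`, and `log_handoff` are hypothetical helpers and are not part of Claude Code.

```python
import re
from datetime import datetime
from typing import List, Optional, Tuple

# Matches checklist lines such as "- [x] PM: PRD complete (...)".
STATUS_RE = re.compile(r"^- \[( |x)\] (\w+):")


def parse_status(hub_text: str) -> List[Tuple[str, bool]]:
    """Return (role, done) pairs for every checklist line in the hub file."""
    pairs: List[Tuple[str, bool]] = []
    for line in hub_text.splitlines():
        m = STATUS_RE.match(line.strip())
        if m:
            pairs.append((m.group(2), m.group(1) == "x"))
    return pairs


def current_owner(hub_text: str) -> Optional[str]:
    """The first unchecked role owns the feature; None means every phase is done."""
    for role, done in parse_status(hub_text):
        if not done:
            return role
    return None


def log_handoff(hub_text: str, src: str, dst: str, when: datetime) -> str:
    """Append '- src → dst: Ready (YYYY-MM-DD HH:MM)' to the Handoff Log section."""
    entry = f"- {src} → {dst}: Ready ({when:%Y-%m-%d %H:%M})"
    lines = hub_text.splitlines()
    start = lines.index("## Handoff Log")  # raises ValueError if the section is missing
    end = start + 1
    while end < len(lines) and not lines[end].startswith("## "):
        end += 1  # walk to the next heading or the end of the file
    while end > start + 1 and not lines[end - 1].strip():
        end -= 1  # step back over blank lines so the entry joins the existing list
    lines.insert(end, entry)
    return "\n".join(lines) + "\n"
```

An orchestrating script, or the agents themselves, could call `current_owner` before acting and `log_handoff` after finishing. That mirrors the read-before, update-after rule the hub file relies on.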

The Hallucination Reduction

George noticed something unexpected: the agents hallucinated less.

“When I used a single Claude session for everything, it would sometimes invent requirements or forget constraints. With three agents, each specialized, the errors dropped dramatically.”

George’s theory: cognitive load matters even for AI. A single agent trying to hold product strategy, design decisions, and implementation details at once loses coherence, while specialized agents with a narrow focus stay on track.

“The PM agent never tried to write code. The engineer never made product decisions. Separation of concerns isn’t just good for software — it’s good for AI workflows.”

The Review Loop

George added a fourth role: the Reviewer.

After the engineer finished, the reviewer agent compared the implementation against the original PRD and design spec. It checked:

- Do all acceptance criteria pass?

- Are edge cases handled?

- Does the design spec match the implementation?

Mismatches got documented and fed back to the engineer.

“The reviewer caught things I would have missed in self-review. It compared documents I’d already forgotten against code I’d just read.”

The Team Dynamics

After six months of multi-agent development, George observed patterns:

The PM agent was thorough but slow. It wanted to document everything. George learned to constrain scope: “This PRD should be 3 pages maximum.”

The designer agent needed examples. Without references to existing components, it would create inconsistent patterns. George started including “match the style of our existing components” in every prompt.

The engineer agent was fast but literal. It implemented exactly what specs said — even when specs had gaps. George learned that designer specs needed more detail than he initially provided.

“Managing AI agents taught me about managing human teams. Clear requirements. Consistent standards. Explicit handoffs. The skills transfer.”

The Cost Analysis

George tracked his multi-agent costs over three months:

Feature development with three agents cost $15-40 per feature, depending on complexity.

“Before agents, a feature like the Pomodoro timer took me 6-8 hours across all three roles. With agents, it took 2-3 hours of my time plus $25 in tokens.”
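The arithmetic behind the headline numbers is worked below using the midpoints of George's Pomodoro estimates (7 hours before, 2.5 hours after) and his $25 token bill. Using midpoints is an assumption. The full ranges give a time reduction between 50% (6 h to 3 h) and 75% (8 h to 2 h).

```latex
\begin{aligned}
\Delta t &= 7\,\mathrm{h} - 2.5\,\mathrm{h} = 4.5\,\mathrm{h} \\
\frac{\Delta t}{7\,\mathrm{h}} &\approx 0.64 \quad \text{(about a 64\% reduction)} \\
r_{\text{break-even}} &= \frac{\$25}{4.5\,\mathrm{h}} \approx \$5.56\ \text{per hour}
\end{aligned}
```

If George values an hour of his own time at more than about $5.56, the token spend pays for itself on this feature.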

Trading roughly $25 in tokens for several hours of his own time per feature raised his effective hourly rate considerably. He shipped more features. He burned out less.

The Scalability Question

Could George have used four agents? Ten?

He experimented with expanding the team:

- Adding a “QA Agent” for test writing

- Adding a “Documentation Agent” for user guides

- Adding a “Security Agent” for vulnerability scanning

More agents meant more orchestration overhead. Beyond 4-5 agents, the coordination cost exceeded the benefit.

“Three to four agents is the sweet spot for solo development. More agents require an orchestration framework I don’t want to build.”

The Human Oversight

George never let agents commit to main without review.

“I’m the integration point. The PM might miss a business constraint only I know. The designer might create patterns inconsistent with brand. The engineer might make performance tradeoffs I’d reject.”

The agents did 80% of the work, and George supplied the remaining 20% as oversight. That ratio worked.

“I’m no longer the person doing the work. I’m the person making sure the work is right.”

The Unexpected Benefit

The multi-agent workflow produced something George hadn’t expected: documentation.

Every feature came with a PRD, design spec, and implementation notes. Previous features George had built solo had minimal docs — he’d known the context, so why write it down?

“Now I have an archive. When I wonder why a feature works a certain way, I read the PRD. When I hire contractors, I give them design specs. The agents created institutional knowledge I never had as a solo founder.”

The Pattern for Others

George’s advice for multi-agent workflows:

- Start with two agents — PM and Engineer. Add Designer only when needed.

- Define handoff artifacts — What document format passes between agents?

- Create a hub file — Something all agents read and update.

- Maintain human review — You’re the orchestrator, not a spectator.

- Track costs — Multi-agent burns tokens faster. Make sure the ROI justifies it.
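Tracking costs per feature only needs token counts per agent and your provider's per-token prices. Below is a minimal sketch. The `Usage` record, the helper names, and the prices in the example are hypothetical placeholders and do not reflect actual Claude pricing, so check your provider's current price list.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class Usage:
    role: str           # which agent incurred the tokens, e.g. "pm"
    input_tokens: int
    output_tokens: int


def _cost(u: Usage, price_in: float, price_out: float) -> float:
    # Prices are in dollars per million tokens.
    return (u.input_tokens * price_in + u.output_tokens * price_out) / 1_000_000


def cost_by_role(usages: Iterable[Usage], price_in: float, price_out: float) -> Dict[str, float]:
    """Sum dollar cost per agent role."""
    totals: Dict[str, float] = {}
    for u in usages:
        totals[u.role] = totals.get(u.role, 0.0) + _cost(u, price_in, price_out)
    return totals


def feature_cost(usages: Iterable[Usage], price_in: float, price_out: float) -> float:
    """Total dollar cost of one feature across all agents."""
    return sum(cost_by_role(usages, price_in, price_out).values())
```

The per-role breakdown shows which agent dominates the bill. With made-up prices of $3 and $15 per million input and output tokens, an engineer run of 1,000,000 input and 100,000 output tokens costs $4.50.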

“Three agents sound complicated. In practice, it’s just three prompts and a text file. The sophistication is in role definition, not technical infrastructure.”

The Current State

Eighteen months later, George still uses the same PM, Designer, and Engineer “three amigos” pattern.

He’s shipped 40+ features with agent teams. His app has grown from MVP to profitable product. He’s considering hiring humans — but for customer success, not development.

“The agents aren’t perfect employees. They need management. They make mistakes. But they’re available 24/7, they don’t burn out, and they never complain about writing tests.”

The solo founder paradox solved: George runs a team of one — orchestrating a squad of AI agents that work like a team of four.