TL;DR

- Reduced social monitoring from 2-3 hours daily to 30 minutes, with better coverage

- Deployed 4 specialized agents: Reddit Scanner, X Listener, HN Observer, Discord Crawler

- Used parallel Claude Code instances with platform-specific prompts and a summary coordinator

- Best for: B2B founders whose customers discuss problems on Reddit, X, HN, or Discord

- Key lesson: Specialized per-platform agents plus a synthesis layer enable systematic listening at scale

A founder deployed four AI agents to monitor developer conversations across Reddit, X, Hacker News, and Discord, cutting daily monitoring time from two to three hours to 30 minutes while improving coverage.

Danny had a monitoring problem.

His product helped developers with AI coding tools. His potential customers talked about their frustrations on Reddit. On X. On Hacker News. On Discord servers.

“The conversations were happening constantly. I couldn’t watch everything. I was missing opportunities — people asking for exactly what I built, and I wasn’t there to answer.”

Hiring someone to monitor social platforms full-time seemed excessive. Manual checking was unreliable. Something had to change.

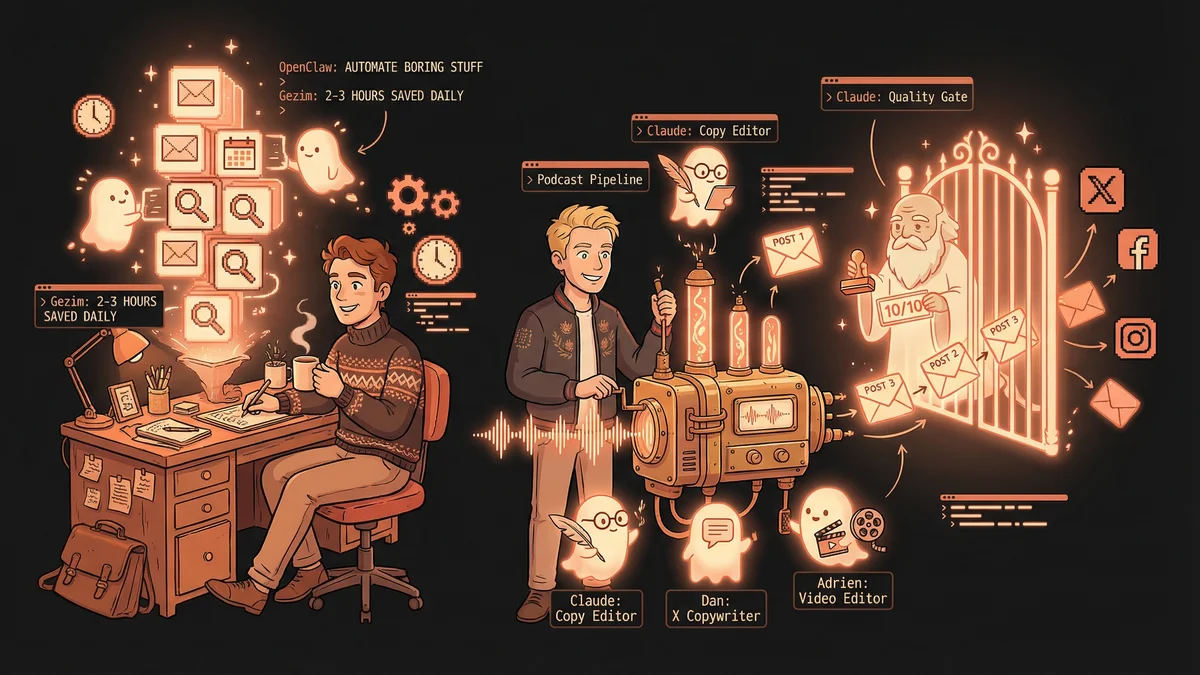

The Multi-Agent Concept

Danny had used Claude Code for single tasks. Then he discovered you could run multiple instances simultaneously.

“Each instance was like a separate worker. What if I had different workers watching different platforms?”

The idea: specialized agents, each focused on one domain, all working in parallel. A swarm instead of a single bee.
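The "swarm" idea maps onto a familiar fan-out pattern: start independent workers at once, then collect their results in one place. The sketch below is only an illustration of that shape. It assumes each agent can be modeled as a function returning a list of findings, and the stub functions (`scan_reddit` and so on) are hypothetical placeholders. In Danny's setup each worker was a separate Claude Code instance, not a Python thread.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


# Hypothetical stand-ins for the four platform agents. A real agent would call
# out to a separate process or service; these just return canned strings.
def scan_reddit() -> list[str]:
    return ["reddit: thread about flaky AI autocomplete"]


def scan_x() -> list[str]:
    return ["x: thread asking for coding-assistant recommendations"]


def scan_hn() -> list[str]:
    return ["hn: discussion of AI pair-programming workflows"]


def scan_discord() -> list[str]:
    return ["discord: question about editor integrations"]


AGENTS: dict[str, Callable[[], list[str]]] = {
    "reddit": scan_reddit,
    "x": scan_x,
    "hn": scan_hn,
    "discord": scan_discord,
}


def run_swarm(agents: dict[str, Callable[[], list[str]]]) -> dict[str, list[str]]:
    """Run every agent concurrently and collect its findings keyed by platform."""
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        futures = {name: pool.submit(fn) for name, fn in agents.items()}
        # .result() blocks until that agent finishes (and re-raises its errors).
        return {name: fut.result() for name, fut in futures.items()}


if __name__ == "__main__":
    for platform, findings in run_swarm(AGENTS).items():
        print(platform, findings)
```

Because the agents are independent, adding a platform means adding one entry to `AGENTS`; the collection step stays the same.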

The Four Agents

Danny designed four custom sub-agents:

Agent 1 (The Reddit Scanner): Monitored programming subreddits for posts about AI coding tools. Looked for frustration signals: “I wish…”, “Why doesn’t…”, “Anyone else hate when…”

Agent 2 (The X Listener): Tracked keywords and hashtags related to developer tools. Focused on complaint threads and recommendation requests.

Agent 3 (The HN Observer): Watched Hacker News discussions about AI, coding assistants, and developer productivity. These conversations often included detailed technical frustrations.

Agent 4 (The Discord Crawler): Monitored public developer Discord servers where practitioners discussed their workflows and tools.

“Each agent had a specialty. Each knew what to look for. Together, they covered more ground than I ever could.”
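To make the Reddit Scanner's "frustration signals" concrete, here is a minimal sketch of the filtering logic its instructions describe. It assumes posts arrive as plain strings, and the phrase list is taken from the examples above. `FRUSTRATION_PATTERNS`, `frustration_signals`, and `is_candidate` are hypothetical names. Danny's agents applied these signals through prompting, not regular expressions, so treat this as an illustration of the criterion rather than his implementation.

```python
import re

# Phrases from the Reddit Scanner's examples; a real list would be longer.
# Hypothetical helper: the article's agents matched signals via prompts, not regex.
FRUSTRATION_PATTERNS = [
    r"\bI wish\b",
    r"\bWhy doesn['’]?t\b",  # accept straight or curly apostrophes
    r"\bAnyone else hate when\b",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in FRUSTRATION_PATTERNS]


def frustration_signals(text: str) -> list[str]:
    """Return the patterns that match the post text (empty list if none)."""
    return [p.pattern for p in _COMPILED if p.search(text)]


def is_candidate(text: str) -> bool:
    """A post deserves a closer look if it shows at least one frustration signal."""
    return bool(frustration_signals(text))


if __name__ == "__main__":
    posts = [
        "I wish my AI assistant understood the whole repo",
        "Here's my dotfiles setup",
        "Anyone else hate when autocomplete invents APIs?",
    ]
    for post in posts:
        print(is_candidate(post), post)
```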

The Workflow Architecture

The agents ran on a schedule.

- Morning: each agent scans its platform for overnight activity.

- Throughout the day: periodic checks for new relevant conversations.

- Evening: a summary report of all findings.
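A daily rhythm like this can be expressed as a small schedule table plus a check for which jobs are due. The clock times and job names below are hypothetical: the article only says "morning", "throughout the day", and "evening". The function deliberately ignores runs that cross midnight to keep the sketch short.

```python
from datetime import time

# Hypothetical times and job names; the article gives only the rough rhythm.
SCHEDULE = [
    (time(7, 0), "scan_overnight"),
    (time(11, 0), "periodic_check"),
    (time(14, 0), "periodic_check"),
    (time(17, 0), "periodic_check"),
    (time(19, 0), "evening_summary"),
]


def jobs_due(last_run: time, now: time) -> list[str]:
    """Jobs scheduled after last_run and at or before now (same day only)."""
    return [job for at, job in SCHEDULE if last_run < at <= now]


if __name__ == "__main__":
    print(jobs_due(time(6, 0), time(12, 0)))
```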

“I’d wake up to a briefing. ‘Here are the five most relevant threads from last night. Here’s what people are complaining about. Here are the opportunities.’”

The agents didn’t just collect — they prioritized. High-relevance conversations surfaced first.
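Prioritization amounts to ranking findings by relevance and cutting the list to a readable briefing. This sketch assumes each agent attaches a relevance score between 0 and 1 to every finding; how that score is produced (by the model, in Danny's setup) is out of scope. `Finding`, `build_briefing`, and `format_briefing` are hypothetical names, and `top_n=5` echoes the "five most relevant threads" in Danny's description.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    platform: str     # e.g. "reddit", "x", "hn", "discord"
    url: str
    summary: str
    relevance: float  # 0.0-1.0, assumed to be assigned by the platform agent


def build_briefing(findings: list[Finding], top_n: int = 5) -> list[Finding]:
    """Surface the highest-relevance findings first, keeping at most top_n."""
    ranked = sorted(findings, key=lambda f: f.relevance, reverse=True)
    return ranked[:top_n]


def format_briefing(findings: list[Finding]) -> str:
    """Render a numbered, one-line-per-thread briefing."""
    return "\n".join(
        f"{i}. [{f.platform}] {f.summary} ({f.url})"
        for i, f in enumerate(findings, 1)
    )


if __name__ == "__main__":
    sample = [
        Finding("hn", "https://example.com/a", "asks for repo-wide refactoring", 0.9),
        Finding("x", "https://example.com/b", "general AI chatter", 0.2),
    ]
    print(format_briefing(build_briefing(sample)))
```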

The Response Drafting

Finding conversations was only half the value. Responding effectively was the other half.

“Agent would say: ‘Found a thread where someone asks for exactly your feature. Here’s a draft response that’s helpful without being salesy.’”

Each agent knew Danny’s product, his voice, what counted as authentic engagement versus spam. Draft responses were ready for review and posting.

“I’d check the draft, make any adjustments, post. What could have taken an hour of writing took two minutes of editing.”
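The article does not publish Danny's drafting prompts. As a hedged illustration, here is one way to bundle the context a drafting agent would need: the product, the founder's voice, and explicit engagement rules. `ENGAGEMENT_RULES` and `build_draft_prompt` are hypothetical, and the rule wording is an assumption that echoes the authenticity constraints described later in the article (engage openly as the founder, no astroturfing).

```python
# Hypothetical rules; the article describes the intent, not the exact wording.
ENGAGEMENT_RULES = [
    "Disclose that you are the product's founder.",
    "Answer the person's actual question before mentioning the product.",
    "Mention the product only if it directly addresses the problem.",
    "No hype and no pressure; keep it helpful, not salesy.",
]


def build_draft_prompt(product: str, voice: str, thread_text: str) -> str:
    """Assemble a request for a reply draft that a human reviews before posting."""
    rules = "\n".join(f"- {rule}" for rule in ENGAGEMENT_RULES)
    return (
        f"Product: {product}\n"
        f"Voice: {voice}\n"
        f"Rules:\n{rules}\n\n"
        f"Thread:\n{thread_text}\n\n"
        "Draft a short, helpful reply for the founder to review before posting."
    )


if __name__ == "__main__":
    print(build_draft_prompt("an AI coding helper", "plain, friendly", "Anyone know a tool that..."))
```

Keeping the rules in one list means every platform agent drafts under the same constraints, while the final post still goes through Danny's review.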

The Pattern Recognition

Individual conversations were valuable. Patterns across conversations were strategic.

“After a month, I asked: ‘What are people complaining about most often?’”

The agents had data. They’d tracked hundreds of threads. Patterns emerged:

- Feature X frustration appeared 47 times

- Pricing complaint appeared 31 times

- Integration request appeared 28 times

“I knew exactly what features to prioritize. Not guessing. Data from actual conversations.”
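Once each tracked thread carries a theme label, a tally like the one above is a counting problem. A minimal sketch, assuming the agents tag every thread with one theme and record its URL; the labels in the usage example are made up and are not Danny's data. Deduplicating `(url, theme)` pairs first keeps a thread that was seen twice from being counted twice.

```python
from collections import Counter


def top_complaints(tagged_threads: list[tuple[str, str]], n: int = 3) -> list[tuple[str, int]]:
    """Count themes across (thread_url, theme) pairs, each pair counted once."""
    counts = Counter(theme for _url, theme in set(tagged_threads))
    return counts.most_common(n)


if __name__ == "__main__":
    threads = [
        ("https://example.com/1", "pricing"),
        ("https://example.com/2", "pricing"),
        ("https://example.com/3", "integration"),
        ("https://example.com/1", "pricing"),  # same thread seen again
    ]
    print(top_complaints(threads))
```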

The Competitive Intelligence

Danny’s agents also tracked competitor mentions.

“When someone complained about a competitor, I needed to know. Not to bash them — but to understand what wasn’t working.”

Competitor complaint tracking revealed opportunities:

- Competitor A’s users frustrated with onboarding

- Competitor B’s users wanted better documentation

- Competitor C’s users complained about pricing

“I could position against real weaknesses, not imagined ones.”
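Competitor tracking can reuse the same idea, grouped by competitor: count complaint themes per product and keep the most frequent one as that competitor's most visible weakness. This is a hypothetical sketch; it assumes mentions have already been extracted as `(competitor, theme)` pairs, and the names in the test are placeholders.

```python
from collections import Counter, defaultdict


def main_weakness(mentions: list[tuple[str, str]]) -> dict[str, str]:
    """Map each competitor to its most frequently mentioned complaint theme."""
    grouped: defaultdict[str, Counter] = defaultdict(Counter)
    for competitor, theme in mentions:
        grouped[competitor][theme] += 1
    return {c: counts.most_common(1)[0][0] for c, counts in grouped.items()}


if __name__ == "__main__":
    print(main_weakness([("Competitor A", "onboarding"), ("Competitor B", "documentation")]))
```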

The Authenticity Challenge

Social listening raises ethical questions. Danny thought carefully about authenticity.

“I never pretended to be a regular user when I was the founder. I never astroturfed positive reviews. I never brigaded competitors.”

The agents helped him find conversations. He engaged as himself, transparently. Product founder responding to feedback, not fake accounts generating buzz.

“Authentic engagement at scale. Not fake engagement. The distinction matters ethically and practically — people can smell astroturfing.”

The Time Transformation

Before the swarm: Danny spent two to three hours daily monitoring platforms, usually missing most conversations anyway.

After the swarm: Danny spent thirty minutes reviewing agent summaries and engaging with high-value conversations.

“I got time back AND better coverage. Usually you trade one for the other. The agents gave me both.”

The saved time went into product development: building features users had actually requested, as surfaced by the agents' monitoring.

The Coordination Layer

Four agents meant coordination challenges.

Danny created a central summary agent that received outputs from all four, deduplicated findings, and produced a unified daily briefing.

“Sometimes the same conversation appeared on Reddit and X. The summary agent caught duplicates and presented unified findings.”

The hierarchy: four specialized collectors feeding into one synthesizer. Assembly line for social intelligence.
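The article doesn't say how the summary agent recognized duplicates. One plausible heuristic, offered purely as an assumption: treat two findings as the same conversation if they point to the same link, or if their titles are nearly identical. The sketch uses `difflib.SequenceMatcher` from Python's standard library; the 0.85 threshold and the `Finding` fields are illustrative choices, not values from Danny's setup.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class Finding:
    platform: str
    title: str
    link: str  # URL the post points to (shared article, repo); "" if none


def same_conversation(a: Finding, b: Finding, threshold: float = 0.85) -> bool:
    """Heuristic: identical non-empty link, or near-identical titles."""
    if a.link and a.link == b.link:
        return True
    ratio = SequenceMatcher(None, a.title.lower(), b.title.lower()).ratio()
    return ratio >= threshold


def deduplicate(findings: list[Finding]) -> list[list[Finding]]:
    """Group findings that appear to be the same conversation across platforms."""
    groups: list[list[Finding]] = []
    for f in findings:
        for group in groups:
            if same_conversation(group[0], f):  # compare against the group's first item
                group.append(f)
                break
        else:  # no existing group matched
            groups.append([f])
    return groups
```

Each resulting group becomes one entry in the unified briefing, listing every platform where the conversation surfaced.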

The Prompt Engineering

Each agent required careful prompting.

The Reddit scanner needed to understand subreddit culture — what counted as relevant versus noise. The X listener needed to filter signal from volume — X had many irrelevant mentions. The HN observer needed to recognize substantive technical discussions. The Discord crawler needed to respect community norms.

“I iterated on the prompts for weeks. Too broad captured noise. Too narrow missed opportunities. The sweet spot took experimentation.”
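Danny's prompts aren't published, but the platform-specific concerns described above can be kept as configuration so each agent is tuned independently. In this hypothetical sketch, each platform gets its own focus instruction and keyword list, and a shared template assembles them. Widening or narrowing one agent then means editing one entry, which suits the kind of iteration Danny describes. `PLATFORM_FOCUS` and `scanner_prompt` are illustrative names, and the instruction wording paraphrases the paragraph above.

```python
# Hypothetical per-platform guidance, paraphrasing the concerns in the article.
PLATFORM_FOCUS = {
    "reddit": "Respect subreddit culture; skip memes and off-topic threads.",
    "x": "Expect high volume; keep only complaints and recommendation requests.",
    "hn": "Prefer substantive technical discussion over drive-by comments.",
    "discord": "Read public servers only and follow each community's norms.",
}


def scanner_prompt(platform: str, keywords: list[str]) -> str:
    """Build one platform agent's instructions from the shared template."""
    focus = PLATFORM_FOCUS[platform]  # KeyError for an unconfigured platform
    return (
        f"You monitor {platform} for developer conversations about AI coding tools.\n"
        f"Focus: {focus}\n"
        f"Keywords: {', '.join(keywords)}\n"
        "Return each relevant thread with a link, a one-line summary, "
        "and a relevance score from 0 to 1."
    )


if __name__ == "__main__":
    print(scanner_prompt("hn", ["coding assistant", "code review"]))
```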

The Scaling Question

Four agents worked. Would forty work better?

Danny explored expansion: agents for specific subreddits, agents for niche communities, agents for particular competitor products.

“More agents meant more coverage but also more synthesis overhead. There’s a limit to useful parallelization.”

He settled on eight agents total: the original four plus four specialized sub-watchers for his most important conversations.

The ROI Assessment

The social listening swarm became core infrastructure.

- Customer acquisition improved: Danny engaged early with people who had buying signals.

- Product development improved: the roadmap reflected actual user needs.

- Competitive awareness improved: he anticipated competitor moves.

“Hard to measure exactly, but the signal quality of my market intelligence increased dramatically.”

The Lessons for Others

Danny shared his approach with other founders.

“Start with one platform. One agent. See if the pattern works for your market. Then expand.”

Not every market has rich social conversation. Not every product benefits from this monitoring. But for developer tools, B2B software, and anything with an active online community — the swarm approach works.

“The technology is available. The question is whether your market talks online. If they do, you should be listening systematically.”

The Future Vision

Danny saw the pattern expanding.

“Right now, I orchestrate four agents manually. In the future, this will be more automated. Agent networks that monitor, synthesize, and suggest responses with minimal human oversight.”

The current state was powerful but primitive. Four terminal windows, manual coordination, human synthesis. The future would be more sophisticated.

“We’re at the ‘four terminals’ stage of multi-agent systems. The patterns are emerging. The tooling will follow.”