TL;DR

- Team migrated 850K lines of Python to 520K lines of Go in 14 months (4 months early)

- Stateless AI agents processed 10,000+ files with consistent migration rules

- Cost: $8,400 in Claude API usage vs. an estimated $400K for consultants (98% savings)

- Best for: legacy codebase modernization exceeding AI context window limits

- Key insight: fresh Claude instances per file prevent context pollution at scale

A team migrated an 850,000-line legacy monolith to microservices using stateless AI agents, finishing four months early and spending $8,400 instead of the $400,000 consultants would have charged.

Priya inherited a monster.

The codebase was 12 years old. Originally PHP, converted to Python in 2015, patched and extended ever since. 850,000 lines of code. Zero tests. Documentation from 2017.

“New features took months because nobody understood how anything worked. Every change broke something unexpected. We were trapped.”

The mandate from leadership: modernize to a microservices architecture. The deadline: 18 months. The budget: not enough for consultants at $500/hour.

Priya had to find another way.

The Scale Problem

Traditional AI-assisted refactoring hit immediate walls.

“I’d open Claude Code, feed it a file, ask for a migration plan. The response would reference dependencies it couldn’t see. The context window couldn’t hold enough of the codebase for coherent suggestions.”

The monolith was too big for any single context window. Even with a 200,000-token window, Claude could hold maybe 5% of the codebase at once.

“I needed a way to work on atomic pieces without losing the big picture.”

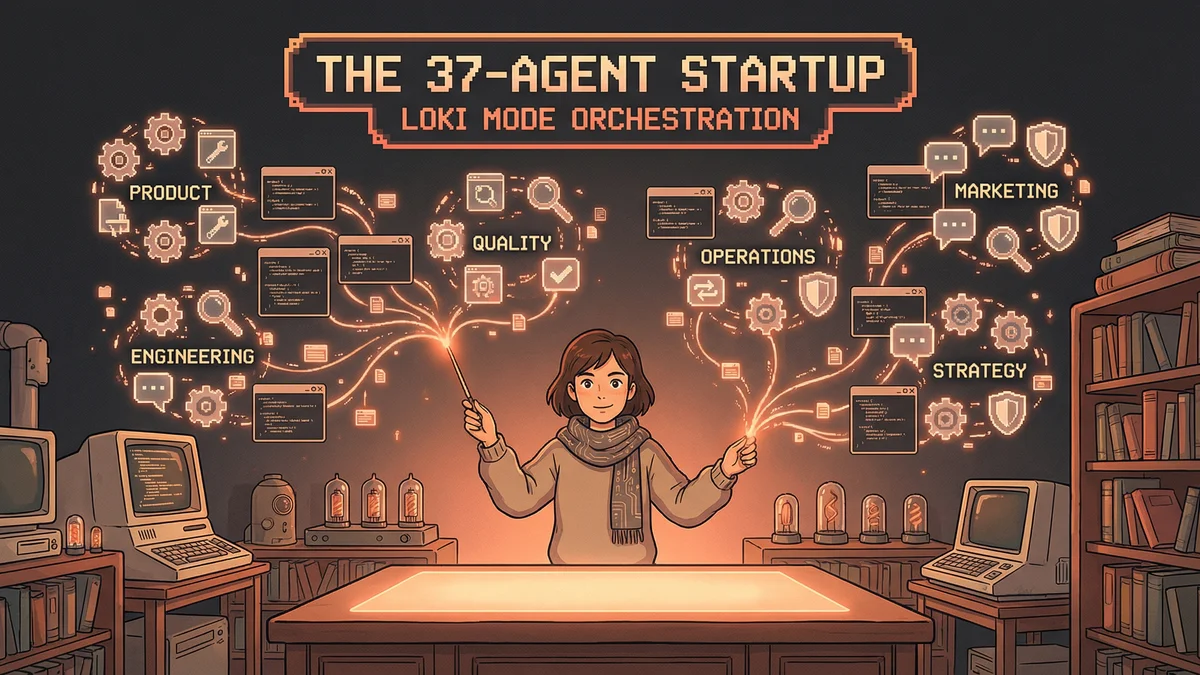

The Map-Reduce Architecture

Priya designed a three-phase system:

Phase 1: Analysis. Claude scanned the entire codebase directory by directory, building a dependency graph and module inventory. Not trying to understand everything, just cataloging what existed.

Phase 2: Decomposition. Based on the inventory, Claude identified natural service boundaries. Which modules could become independent services? Which had circular dependencies that needed breaking?

Phase 3: Migration. Each module migrated independently using “stateless worker” agents: fresh Claude instances that processed one file at a time with only the migration rules loaded.

“The key insight: I didn’t need Claude to understand the whole codebase. I needed Claude to understand the rules and apply them consistently.”
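The Phase 1 inventory does not need an AI at all for the import edges. Here is a minimal sketch of how a dependency graph could be built with Python's standard `ast` module. The function names and the idea of keying modules by their top-level name are assumptions for illustration, not the team's actual tooling.

```python
import ast
from collections import defaultdict


def imported_modules(source: str) -> set[str]:
    """Return the top-level names of every absolute import in `source`."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Relative imports (level > 0) are skipped in this simplified sketch.
            found.add(node.module.split(".")[0])
    return found


def build_dependency_graph(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each internal module to the internal modules it imports.

    `sources` maps a module name to its source text. Imports of anything
    outside `sources` (stdlib, third-party packages) are ignored.
    """
    graph = defaultdict(set)
    for name, text in sources.items():
        internal = sources.keys() - {name}
        graph[name] |= imported_modules(text) & internal
    return dict(graph)
```

A graph in this shape is enough to spot well-isolated modules (few incoming edges) and tangled ones (edges in both directions), which is the raw material for choosing service boundaries.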

The Stateless Worker Pattern

Traditional Claude Code sessions accumulate context. That’s usually helpful — the AI remembers what you discussed.

For massive migrations, accumulated context became a liability. The session would fill with irrelevant details from earlier files, confusing analysis of later ones.

Priya’s solution: spawn a fresh Claude Code instance for each file.

The Worker Script:

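    # One fresh Claude instance per file; no session state is shared.
    for file in migration_queue:
        spawn_fresh_claude(
            prompt=MIGRATION_RULES + "\n\n" + file.content,
            # Swap only the trailing extension, not every ".py" in the path.
            output=file.path.removesuffix('.py') + '.go',
        )

Each worker knew only: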

- The migration rules (Python → Go patterns)

- The current file being processed

- The target API contracts for the new services

Nothing else. No accumulated baggage. Peak attention on every file.
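To make the isolation concrete, here is a rough sketch of a worker loop in which every prompt is built from scratch out of the three inputs listed above. `call_model` is a hypothetical stand-in for whatever launches a fresh Claude instance; the article does not say how Priya invoked it, so nothing here should be read as her actual script.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SourceFile:
    path: str
    content: str


def build_prompt(rules: str, contracts: str, file: SourceFile) -> str:
    """Assemble everything a worker knows: rules, contracts, one file."""
    return (
        f"## Migration rules\n{rules}\n\n"
        f"## Target API contracts\n{contracts}\n\n"
        f"## File to migrate: {file.path}\n{file.content}"
    )


def migrate_all(
    files: list[SourceFile],
    rules: str,
    contracts: str,
    call_model: Callable[[str], str],  # hypothetical: prompt in, Go source out
) -> dict[str, str]:
    """Run one independent model call per file; nothing carries over between calls."""
    results = {}
    for file in files:
        go_path = file.path.removesuffix(".py") + ".go"
        results[go_path] = call_model(build_prompt(rules, contracts, file))
    return results
```

Because `call_model` receives only the prompt string, the worker for one file cannot see anything about any other file. That is the whole point of the pattern.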

The Migration Rules

The rules document became the critical artifact.

It specified:

- Python pattern → Go equivalent

- Naming conventions

- Error handling approach

- How to handle database calls

- How to handle API calls

- What to do with ambiguous cases

“Writing the rules took two weeks. It was the hardest part. But once the rules existed, Claude could apply them to 10,000 files without me reviewing each one.”
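The article does not show the rules document itself. One way such rules might be kept as structured data and rendered into prompt text is sketched below. The `Rule` fields and the output format are assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    category: str        # e.g. "naming", "error handling"
    python_pattern: str  # what the worker should look for
    go_equivalent: str   # what it should write instead
    note: str = ""       # optional guidance for ambiguous cases


def render_rules(rules: list[Rule]) -> str:
    """Render rules as plain text, grouped by category in first-seen order."""
    by_category: dict[str, list[Rule]] = {}
    for rule in rules:
        by_category.setdefault(rule.category, []).append(rule)

    lines = []
    for category, group in by_category.items():
        lines.append(f"[{category}]")
        for rule in group:
            line = f"- {rule.python_pattern} => {rule.go_equivalent}"
            if rule.note:
                line += f" ({rule.note})"
            lines.append(line)
    return "\n".join(lines)
```

Keeping rules as data rather than free text makes it easier to add, reorder, or retire individual rules as the document grows.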

Week One: The Pilot

Priya started with a low-risk module: notification services. 47 Python files. Clear boundaries. Well-isolated from the rest of the system.

She ran the stateless worker pattern overnight.

Morning result: 47 Go files. Some with errors. Most compiling.

“The first pass achieved maybe 70% correct migration. The remaining 30% needed human review and Claude-assisted fixes.”

The 70% was the breakthrough. Manual migration of 47 files would have taken two weeks. Claude did it in hours. The remaining fixes took two days.

“Net savings: 10 days of developer time on one module. We had 35 modules to migrate.”

The Feedback Loop

Every migrated module taught the system something new.

When Claude made consistent mistakes, Priya added rules: “When you see this pattern, don’t translate literally — use this idiomatic Go approach instead.”

When Claude handled something well, she noted it: “This is working. Don’t change it.”

The rules document grew from 5 pages to 45 pages over three months. It encoded the team’s migration knowledge in machine-readable form.

“The rules became the real deliverable. They represented our architectural decisions in a way Claude could execute.”
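Spotting "consistent mistakes" can be partly mechanical. The sketch below assumes reviewers tag each problem they fix with a short label (the tags and the threshold are invented for illustration). It surfaces the labels that recur across enough distinct files to deserve a new rule.

```python
from collections import Counter


def rule_candidates(
    review_notes: list[tuple[str, str]], min_count: int = 3
) -> list[tuple[str, int]]:
    """Return mistake tags seen in at least `min_count` distinct files.

    `review_notes` holds (file_path, mistake_tag) pairs recorded during review.
    Results are ordered most frequent first, then alphabetically by tag.
    """
    distinct = set(review_notes)  # count each tag at most once per file
    counts = Counter(tag for _, tag in distinct)
    frequent = [(tag, n) for tag, n in counts.items() if n >= min_count]
    return sorted(frequent, key=lambda item: (-item[1], item[0]))
```

A mistake that shows up in one file is a fix. The same mistake in many files is a missing rule.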

The Integration Phase

Migrated modules needed to work together.

Priya set up a second class of agents: integrators. These ran after workers completed, checking:

- Do API contracts match between services?

- Do data types align at boundaries?

- Do error codes propagate correctly?

When integrators found mismatches, they generated fix requests that fed back to workers.

“It was like having a QA team that worked overnight. Every morning I’d review the integration report and know exactly what needed attention.”
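The first two integrator checks boil down to comparing what one service declares with what another expects. A simplified sketch follows, assuming contracts are available as plain dictionaries of field names to type names. That representation is hypothetical; the article does not describe the real contract format.

```python
def contract_mismatches(
    provider: dict[str, dict[str, str]],
    consumer: dict[str, dict[str, str]],
) -> list[str]:
    """Compare the fields a consumer expects with what a provider declares.

    Both arguments map an endpoint name to {field_name: type_name}.
    Returns human-readable mismatch descriptions, sorted for stable reports.
    """
    problems = []
    for endpoint, expected in consumer.items():
        declared = provider.get(endpoint)
        if declared is None:
            problems.append(f"{endpoint}: endpoint not provided")
            continue
        for field, type_name in expected.items():
            if field not in declared:
                problems.append(f"{endpoint}.{field}: missing from provider")
            elif declared[field] != type_name:
                problems.append(
                    f"{endpoint}.{field}: provider has {declared[field]}, "
                    f"consumer expects {type_name}"
                )
    return sorted(problems)
```

Each string in the result is the kind of item that could become a fix request for a worker or a line in the morning integration report.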

Month Three: The Hard Parts

Some code defied automated migration.

“The database layer was a nightmare. Raw SQL strings embedded everywhere. No ORM. No pattern consistency.”

These sections required human-Claude collaboration. Priya would work through one file with Claude, establishing the approach, then document it as a new rule for the workers.

“About 15% of the codebase needed hands-on work. The other 85% went through the automated pipeline.”
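Deciding which files belong in the hands-on 15% can start with a cheap scan. The sketch below flags string literals that look like embedded SQL so those files can be routed to a human. The regular expression is a heuristic chosen for this example, and it will miss queries assembled from fragments.

```python
import ast
import re

# Heuristic: the literal must open with a recognisable SQL statement shape.
SQL_START = re.compile(
    r"\s*(SELECT\b.+?\bFROM|INSERT\s+INTO|UPDATE\b.+?\bSET|DELETE\s+FROM)\b",
    re.IGNORECASE | re.DOTALL,
)


def find_raw_sql(source: str) -> list[tuple[int, str]]:
    """Return (line_number, first_keyword) for string literals that look like SQL."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Constant) and isinstance(node.value, str):
            if SQL_START.match(node.value):
                hits.append((node.lineno, node.value.split()[0].upper()))
    return sorted(hits)
```

Files with many hits are candidates for the pair-with-Claude-then-write-a-rule treatment. Files with none can go straight through the automated pipeline.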

The Testing Strategy

The original codebase had no tests. The new services needed them.

Priya added a worker class: test generators.

For each migrated file, a test-generator Claude instance created unit tests based on:

- Function signatures

- Return type expectations

- Edge cases visible in the code

“The generated tests weren’t perfect. But they caught regressions. That’s all we needed for migration confidence.”
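A test generator's prompt needs the function signatures spelled out. As an illustration, here is how they could be pulled from the original Python source with the standard `ast` module. The article does not say whether the generators read the Python originals or the Go output, so treat this as one possible input, not the team's method.

```python
import ast


def function_signatures(source: str) -> list[str]:
    """List top-level function signatures, including annotations when present."""
    signatures = []
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            args = ast.unparse(node.args)  # requires Python 3.9+
            returns = f" -> {ast.unparse(node.returns)}" if node.returns else ""
            signatures.append(f"{node.name}({args}){returns}")
    return signatures
```

The resulting list gives a generator the names, parameters, and declared return types to target without handing it anything beyond the one file.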

Month Six: The Halfway Point

At the six-month mark:

- 18 of 35 modules migrated

- 340,000 lines of Go replacing 500,000 lines of Python

- Test coverage: 0% → 65%

- Build time: 15 minutes → 3 minutes

“The codebase was getting healthier. Not just translated — improved. The stateless worker pattern forced us to think about every module in isolation, which revealed hidden coupling.”

The Circular Dependency Crisis

Month eight brought a crisis.

Three modules had circular dependencies so tangled that no migration order worked. Module A needed B’s types. B needed C’s interface. C needed A’s implementation.

“These modules had grown together over years, accumulating interdependencies nobody planned.”

Priya created a special “dependency breaker” agent. Its job: analyze circular clusters and propose interface extractions that would break the cycles.

It took two weeks of human-AI collaboration to untangle. But it worked. The three modules became four, with a shared interface package eliminating the circle.

“The AI didn’t solve the architecture problem. It helped me see the problem clearly enough to design a solution.”
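Finding the tangled clusters is a classic graph problem. Assuming the dependency graph is available as a mapping from each module to the set of modules it imports (an assumed representation), Tarjan's strongly connected components algorithm identifies every group of modules caught in a cycle. This is a sketch of that standard technique, not the "dependency breaker" agent itself.

```python
def circular_clusters(graph: dict[str, set[str]]) -> list[list[str]]:
    """Return groups of modules that depend on each other in a cycle.

    Uses Tarjan's strongly connected components algorithm. Only components
    with more than one module, or a module importing itself, are reported.
    Recursive, so intended for module-level graphs, not huge ones.
    """
    index, low, on_stack, stack = {}, {}, set(), []
    clusters = []

    def visit(node):
        index[node] = low[node] = len(index)
        stack.append(node)
        on_stack.add(node)
        for dep in sorted(graph.get(node, ())):
            if dep not in index:
                visit(dep)
                low[node] = min(low[node], low[dep])
            elif dep in on_stack:
                low[node] = min(low[node], index[dep])
        if low[node] == index[node]:  # node is the root of a component
            component = []
            while True:
                member = stack.pop()
                on_stack.discard(member)
                component.append(member)
                if member == node:
                    break
            if len(component) > 1 or node in graph.get(node, ()):
                clusters.append(sorted(component))

    for node in sorted(graph):
        if node not in index:
            visit(node)
    return sorted(clusters)
```

With A importing B, B importing C, and C importing A, the function reports the three as one cluster. Once each module imports only a shared interface package, the result is empty and any migration order works.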

Month Twelve: The Home Stretch

Year one ended with 30 of 35 modules complete.

The remaining five were the hardest: payment processing, authentication, user management, audit logging, and the core API router.

“These modules touched everything. Migration meant brief service disruptions. We needed careful timing.”

Priya scheduled the final migrations for low-traffic periods. The stateless workers ran. Integrators verified. Human reviewers approved.

In month fourteen, four months ahead of the deadline, the last module went live.

The Results

Final accounting:

- 850,000 lines of Python → 520,000 lines of Go

- 35 modules → 12 microservices

- 0% test coverage → 78%

- Deploy time: 45 minutes → 8 minutes

- Incident rate: reduced 60%

Claude Code costs for the migration: approximately $8,400 over 14 months.

Equivalent consultant cost: estimated $400,000.

“We paid for the migration with what we would have spent on two weeks of consultants.”
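The headline numbers are easy to check. This short calculation uses only figures quoted in the article: the $8,400 spend, the $400,000 estimate, and the $500/hour consultant rate.

```python
api_cost = 8_400            # Claude spend over 14 months (from the article)
consultant_cost = 400_000   # estimated consultant cost (from the article)
hourly_rate = 500           # consultant rate quoted earlier in the article

savings = 1 - api_cost / consultant_cost
equivalent_hours = api_cost / hourly_rate

print(f"savings: {savings:.1%}")                              # savings: 97.9%
print(f"consultant-hours for the same spend: {equivalent_hours:.1f}")  # 16.8
```

The 97.9% rounds to the 98% in the summary. At $500/hour, the whole AI bill comes to roughly 17 billable hours for a single consultant.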

The Lessons

Priya documented what she learned:

- Context limits aren’t blockers; they’re design constraints. Work with small units, not big contexts.

- Rules beat prompting at scale. Write detailed rules once; apply them to 10,000 files.

- Fresh instances beat accumulated context for repetitive mechanical tasks.

- Human-AI collaboration beats pure automation for architectural decisions.

- The hardest 15% can’t be automated. Plan for it.

The Aftermath

Two years later, the microservices architecture runs smoothly.

New features deploy in days, not months. Onboarding takes weeks instead of quarters. The team grew from 8 to 22 engineers without the codebase becoming unmanageable.

“We slayed the monolith. Not by heroic coding — by systematic decomposition with AI as the labor force.”

The monster is gone. The team that inherited it built something better.