TL;DR

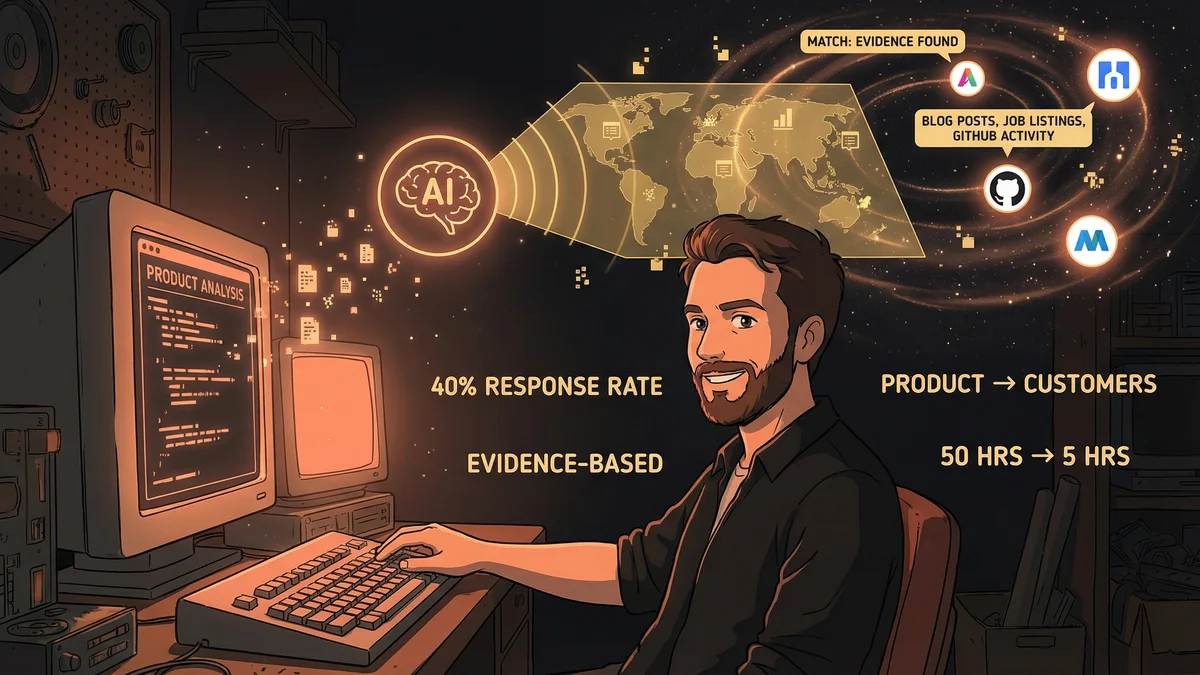

- Turned 40% of cold outreach into pilot customers (two of five companies, with three of five replying) by targeting companies with evidence of specific pain points

- Reduced lead research from 50 hours to 5 hours for 20 qualified prospects

- Used Claude Code to analyze the product's codebase, then search for matching customer signals

- Best for: Technical founders who can give AI deep access to their actual product

- Key lesson: AI can find customers showing evidence of needing exactly what you built

A solo founder used Claude Code to analyze his own codebase, then find companies publicly struggling with the exact problem his product solved. Of the five companies he contacted cold, three replied and two (40%) became pilot customers.

Nathan had a working product and no idea who to sell it to.

He’d built a developer tool. Something that helped teams manage their AI coding workflows. The product worked. Early users loved it. But finding those early users had been random — friends of friends, lucky Reddit posts, chance encounters.

“I needed systematic lead generation. But I didn’t know where to start. Traditional approaches felt wrong — I couldn’t afford a sales team, and cold outreach to random companies seemed like spam.”

Then he tried something unconventional.

The Experiment

Nathan pointed Claude Code at his own codebase. All of it — the product, the documentation, the landing page copy.

“I wanted Claude to deeply understand what I’d built. Not from a sales pitch, but from the actual code and docs. The real thing.”

Then he asked a question that felt almost too simple:

“Based on everything you now know about this product, identify the top 5 companies in my area that would be the best pilot customers. Explain your reasoning.”

The Analysis

Claude didn’t just search for “companies that use developer tools.”

It analyzed the product’s architecture. The problems it solved. The workflow it assumed. The pain points it addressed.

“Claude understood that my tool was specifically useful for teams doing a certain kind of AI-assisted development. That narrowed the target dramatically.”

Then Claude searched. Company websites. Tech blogs. LinkedIn engineering posts. GitHub activity.

“It found companies that were publicly struggling with the exact problem I solved. Blog posts about ‘managing Claude outputs’ or ‘keeping track of AI-generated code.’ These weren’t guesses — they were signals.”

The Results

Claude produced a list of five companies. Each entry included:

- Company name and why they matched

- Evidence of the pain point (links to their blog posts or job listings mentioning relevant challenges)

- The likely decision maker (based on org charts and LinkedIn)

- A suggested approach angle
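The four parts of each entry map naturally onto a small record type. The sketch below is one hypothetical way to store them, not Nathan's actual setup: the class name, field names, and the `outreach_ready` filter are all assumptions made for illustration, and the sample company and URL are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class LeadEntry:
    """One researched prospect. Field names are illustrative assumptions."""
    company: str
    match_reason: str
    evidence_urls: list[str] = field(default_factory=list)  # blog posts, job listings
    decision_maker: str = ""
    approach_angle: str = ""

    def has_evidence(self) -> bool:
        # A lead with no public source to cite is only a guess.
        return len(self.evidence_urls) > 0


def outreach_ready(leads: list[LeadEntry]) -> list[LeadEntry]:
    """Keep only leads with at least one evidence link and a named contact."""
    return [lead for lead in leads if lead.has_evidence() and lead.decision_maker]


if __name__ == "__main__":
    leads = [
        LeadEntry(
            company="ExampleCo",  # placeholder, not a real prospect
            match_reason="Team publicly discusses tracking AI-assisted PRs",
            evidence_urls=["https://example.com/blog/tracking-ai-prs"],
            decision_maker="VP of Engineering",
            approach_angle="Reference the blog post and offer a pilot",
        ),
        LeadEntry(company="NoEvidence Inc", match_reason="Same industry"),
    ]
    for lead in outreach_ready(leads):
        print(lead.company, "->", lead.approach_angle)
```

Requiring an evidence link before a lead counts as ready keeps the list tied to what a company has actually said in public, which is the property the entries above depend on.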

“This wasn’t generic. One entry said, ‘Their VP of Engineering wrote a blog post three months ago about difficulties tracking AI-assisted PRs — your product directly addresses this.’ That’s not something I’d find with basic Google searches.”

The Outreach

Nathan contacted all five companies.

“I didn’t send template pitches. I referenced the specific problems each company had publicly discussed. ‘I saw your post about X. We built something that might help.’”

Three responded. Two became pilot customers.

“A 40% response rate. From cold outreach. That’s unheard of. The difference was relevance — I wasn’t guessing they might have a problem, I knew they did because Claude found the evidence.”
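Worked through from the counts in this section (five contacted, three replies, two pilots), the funnel looks like this. It is a minimal sketch; the `rate` helper and variable names are assumptions introduced here. On these counts the 40% figure corresponds to the share of contacted companies that became pilot customers.

```python
def rate(part: int, whole: int) -> float:
    """Return part/whole as a percentage."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100.0 * part / whole


contacted, replied, pilots = 5, 3, 2  # counts taken from the story above

reply_rate = rate(replied, contacted)        # share of contacted companies that replied
pilot_rate = rate(pilots, contacted)         # share of contacted companies that became pilots
pilot_given_reply = rate(pilots, replied)    # share of repliers that became pilots

print(f"Replied: {reply_rate:.0f}% of contacted")
print(f"Became pilots: {pilot_rate:.0f}% of contacted")
print(f"Became pilots: {pilot_given_reply:.0f}% of those who replied")
```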

The Scalable Process

One successful experiment became a repeatable system.

Nathan established a weekly rhythm: ask Claude to find five more potential customers based on fresh signals. New blog posts. Recent job listings. Conference talk topics.

“Claude became my lead researcher. I’d spend Monday mornings reviewing its findings, then reach out to the most promising ones. Maybe two hours of work that would have taken days manually.”

The quality stayed high because the research was deep. Not “companies in my industry” but “companies showing specific indicators of the exact problem I solve.”

The Competitive Analysis Extension

Nathan pushed further. If Claude could find customers, could it analyze competitors?

“I asked Claude to find companies building similar products and analyze how I could differentiate. Not surface-level stuff — actual technical comparisons based on their public code and docs.”

Claude identified four competitors Nathan hadn’t known about. For each, it analyzed:

- Technical approach (from GitHub repos and documentation)

- Pricing model (from public pages)

- Target customer (from marketing copy and testimonials)

- Gaps Nathan’s product could exploit

“One competitor focused on large enterprises. Another on individual developers. Nobody was serving the mid-size startup segment. That became my positioning.”

The Integration Intelligence

Claude’s product understanding enabled something else: integration opportunities.

“I asked which tools my ideal customers were likely already using. Not guessing — Claude analyzed the tech stacks of companies matching my customer profile.”

The analysis revealed that most potential customers used three specific tools that Nathan’s product didn’t integrate with.

“Building those integrations became the roadmap. Not based on what I thought mattered, but on what the data showed about actual customer workflows.”

The Limitation Recognition

Nathan was clear about boundaries.

“Claude could find signals. It couldn’t verify them. One company that looked perfect from public data turned out to have already solved the problem internally. The signals were real, the conclusion was wrong.”

Lead research still required human judgment. Verification. Conversations that revealed what public data couldn’t.

“Claude improved the hit rate dramatically, but it didn’t eliminate misses. I still had to do the relationship-building part myself.”

The Founder’s Advantage

Nathan realized this approach worked particularly well for founders with technical products.

“I could point Claude at the actual code. It understood the product at a level no sales AI could. That depth enabled the precision targeting.”

For non-technical products, the approach would need adaptation. Different signals. Different analysis methods.

“But the core principle — use AI to deeply understand what you’re selling, then find people showing evidence of the exact problem — that generalizes.”

The Time Mathematics

Nathan quantified the impact.

“Traditional lead research for my market: maybe 50 hours to find 20 qualified prospects. With Claude: about 5 hours for the same 20, with higher qualification rates.”
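Taking Nathan's estimates at face value, the per-prospect arithmetic can be sketched as follows. The helper name is an assumption, and the inputs are his rough figures rather than measured data.

```python
def hours_per_prospect(total_hours: float, prospects: int) -> float:
    """Average research time spent per qualified prospect."""
    if prospects <= 0:
        raise ValueError("prospects must be positive")
    return total_hours / prospects


manual_hours, assisted_hours, prospects = 50, 5, 20  # Nathan's estimates

manual_per = hours_per_prospect(manual_hours, prospects)
assisted_per = hours_per_prospect(assisted_hours, prospects)
hours_saved = manual_hours - assisted_hours
reduction_pct = 100.0 * hours_saved / manual_hours

print(f"Manual: {manual_per} h per prospect")
print(f"With Claude: {assisted_per} h per prospect")
print(f"Saved: {hours_saved} h per batch of {prospects} ({reduction_pct:.0f}% less research time)")
```

That is 2.5 hours per prospect manually versus 15 minutes with Claude, or 45 hours back for every 20 prospects researched.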

The savings compounded. More time for product development. More time for customer conversations. Less time in research rabbit holes.

“I’m a solo founder. Every hour matters. Claude shifted dozens of hours from research to action.”

The Advice

For other founders considering this approach:

“Give Claude real access to your product. Not marketing copy — actual code, docs, internal knowledge. The depth of understanding determines the quality of targeting.”

Start specific. Don’t ask for “customers” — ask for customers matching specific criteria based on specific evidence types.

“And verify. Claude will find patterns, but patterns need validation. Use the AI for discovery, use yourself for judgment.”
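One way to make "start specific" concrete is to assemble the request from explicit criteria and evidence types instead of typing a loose question each week. The function below is a hypothetical sketch: `build_lead_prompt` and its parameters are assumptions, it only builds a text prompt, and it does not call any Claude API. The example criteria and evidence types are drawn from the story above.

```python
def build_lead_prompt(
    product_summary: str,
    criteria: list[str],
    evidence_types: list[str],
    count: int = 5,
) -> str:
    """Build a lead-research prompt that names criteria and required evidence."""
    if not criteria or not evidence_types:
        raise ValueError("provide at least one criterion and one evidence type")
    lines = [
        f"Based on what you know about this product: {product_summary}",
        f"Identify {count} companies that match ALL of these criteria:",
    ]
    lines += [f"- {c}" for c in criteria]
    lines.append("Only include a company if you can cite at least one of these evidence types:")
    lines += [f"- {e}" for e in evidence_types]
    lines.append(
        "For each company, give the evidence link, the likely decision maker, "
        "and a suggested approach angle."
    )
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_lead_prompt(
        product_summary="a tool for managing AI coding workflows",
        criteria=["mid-size startup", "team doing AI-assisted development"],
        evidence_types=["engineering blog post", "job listing", "conference talk"],
    )
    print(prompt)
```

Asking for the evidence link in the output also makes the verification step easier, since each claim arrives with a source to check by hand.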

The Current State

A year later, Nathan’s company has paying customers across three continents.

Most came through the same process: Claude finding signals, Nathan making contact, relationships building from there.

“I still run the lead discovery every week. The product has evolved, so the customer profile has evolved. Claude adapts because it’s always analyzing the current product, not a static description.”

The tool that helped developers manage AI was finding its own customers through AI-driven lead research. The symmetry wasn’t lost on him.

“AI finding customers for AI products. There’s probably a lesson there about the future of B2B sales.”