TL;DR

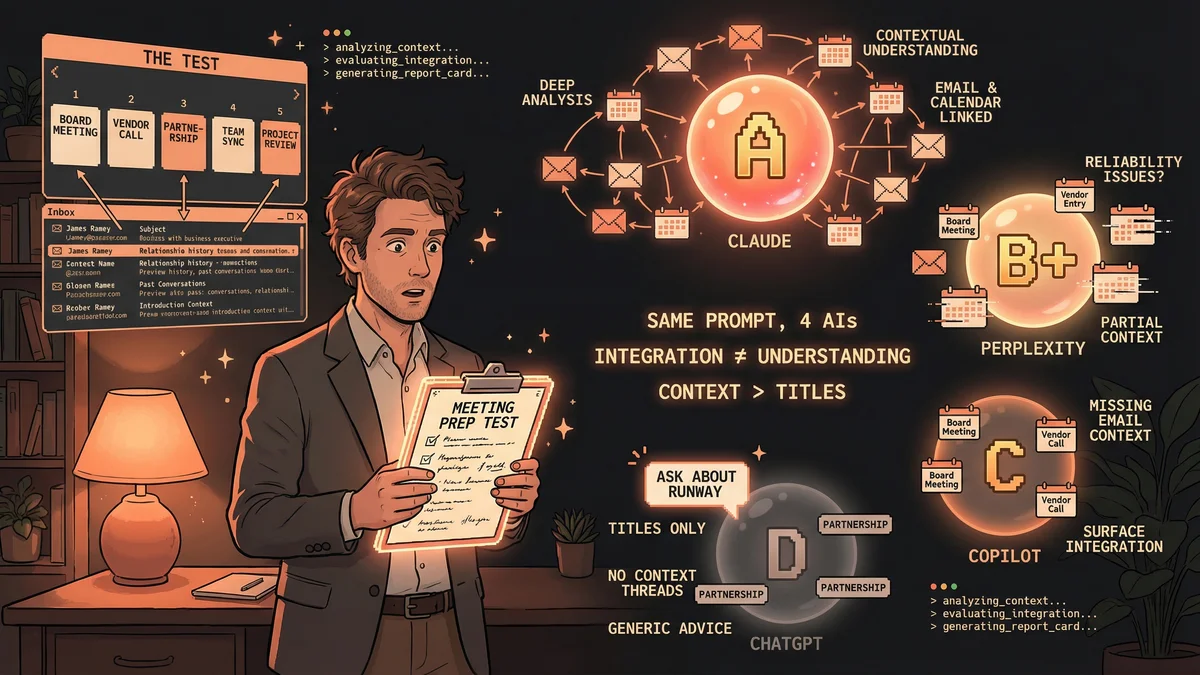

- Tested 4 AI assistants on the same daily task: meeting prep with calendar + email analysis

- Claude: A — Perfect context, analyzed email history and past dealings

- Perplexity: B+ — Great context when it worked, but missed 2 of 5 meetings

- Microsoft Copilot: C — Found meetings, missed critical recent email

- ChatGPT: D — Just read titles, gave generic advice (“It’s a board meeting, ask about runway”)

- Most relevant for: executives and knowledge workers with 5+ daily meetings

- Key lesson: Calendar integration ≠ calendar understanding

Most AI comparisons test coding or creative writing. James Ramey tested something every professional does daily: preparing for meetings.

You have five calls scheduled. The calendar shows titles—“Board Meeting,” “Vendor Check-in,” “Partnership Discussion”—but what are they actually about? Who introduced you to this person? What happened in your last conversation?

Ramey, a business executive, asked four major AI assistants to answer these questions by analyzing his calendar and email history. Same prompt, same data, four very different results.

The Test

The prompt was specific: “Help me prep for my day. Look through my calendar and tell me what calls and meetings I have. Give me the background and context of each call—what is each call about, don’t look at the title only. Go back through email history and files and tell me what the company does, what my background is with the company, past dealings I’ve had with the person/company, and who introduced me to the company or person.”

Simple ask. Real daily workflow. Here’s what happened.

Claude AI: A

Identified every meeting. Pulled perfect context and background for each call by analyzing email history and past interactions. Knew what each meeting was about beyond the calendar title.

What it missed: Personal appointments like “pick up kids” weren’t flagged as meetings requiring prep.

Why it worked: Deep email analysis + calendar cross-referencing. Didn’t just see the meeting—understood the relationship.

Perplexity AI: B+

When it worked, the context was flawless. Pulled detailed background, analyzed email threads, delivered exactly what was needed for meeting prep.

What went wrong: Missed 2 of 5 meetings entirely. Got the time wrong on one (a recurring problem with Perplexity’s calendar integration, according to Ramey).

The verdict: Great brain, unreliable eyes. Perfect analysis when it sees the data, but doesn’t always see the data.

Microsoft Copilot: C

Identified each meeting. Spotted a potential cancellation (smart—scanned recent emails for schedule changes). But missed key context on an important meeting: a high-impact email received the night before that changed the entire conversation.

What happened: Surface-level calendar scanning caught the meeting and noticed cancellation signals, but didn’t dive deep enough into email history to pull the critical context that mattered.

The pattern: Good at logistics (what/when/who), weak at relationship context (why/background/history).

ChatGPT: D

Identified the meetings. That’s where the value stopped.

Background and context were “beyond worthless,” per Ramey. ChatGPT basically read the calendar title and gave generic advice: “It’s a board meeting, so you should ask about runway.”

No email analysis. No past context. No relationship history. Just calendar titles + corporate advice templates.

The gap: Saw the meeting. Didn’t understand it.

What This Actually Tests

This isn’t about which AI is “smarter.” It’s about which AI is designed to cross-reference systems—calendar structure plus email context—and synthesize that into actionable intelligence.

Calendar integration means the AI can see your schedule.

Calendar understanding means the AI knows:

- What this meeting is actually about (beyond the title)

- What happened in your last conversation with this person

- Who introduced you

- What emails were exchanged recently that change the agenda

The difference between these two things is the difference between showing up to a meeting and showing up prepared.
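To make the integration vs. understanding distinction concrete, here is a minimal Python sketch. It is not how any of the four assistants works internally. The `Meeting` and `Email` types, both functions, the "introduced by" heuristic, and the addresses are hypothetical, made up purely for illustration.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Meeting:
    title: str
    start: datetime
    attendees: list[str]  # email addresses of the other participants


@dataclass
class Email:
    sender: str
    recipients: list[str]
    subject: str
    received: datetime


def title_only_prep(meeting: Meeting) -> str:
    # "Integration": the assistant sees the event but reads nothing beyond its title.
    return f"{meeting.title} at {meeting.start:%H:%M}"


def cross_referenced_prep(meeting: Meeting, emails: list[Email], me: str) -> dict:
    # "Understanding": gather every email involving an attendee, received before the meeting.
    people = set(meeting.attendees)
    related = [
        e for e in emails
        if e.received < meeting.start
        and (e.sender in people or people & set(e.recipients))
    ]
    related.sort(key=lambda e: e.received)  # oldest first
    # Hypothetical introduction heuristic: the earliest related email sent by a third
    # party (neither you nor an attendee) that was addressed to you and an attendee.
    intro = next(
        (e.sender for e in related
         if e.sender != me and e.sender not in people and me in e.recipients),
        None,
    )
    return {
        "meeting": title_only_prep(meeting),
        "introduced_by": intro,
        "latest_email": related[-1].subject if related else None,
        "history": [e.subject for e in related],
    }


# Hypothetical example data.
me = "me@example.com"
meeting = Meeting("Partnership Discussion", datetime(2025, 3, 4, 10, 0),
                  ["dana@partner.example"])
emails = [
    Email("alex@friend.example", [me, "dana@partner.example"],
          "Intro: you two should talk", datetime(2025, 1, 10, 9, 0)),
    Email("dana@partner.example", [me],
          "Revised terms before tomorrow", datetime(2025, 3, 3, 21, 30)),
]
prep = cross_referenced_prep(meeting, emails, me)
print(prep["introduced_by"], "|", prep["latest_email"])
# prints: alex@friend.example | Revised terms before tomorrow
```

The title-only function roughly mirrors the title-level behavior Ramey saw from ChatGPT: it knows the meeting exists and nothing more. The cross-referenced version surfaces who made the introduction and the email from the night before, the kind of context the lower-graded assistants missed. A real assistant would also have to read email bodies and files, which this sketch deliberately ignores.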

The Real Takeaway

AI assistants are specialists now. Choose by task, not by brand.

If you’re doing 5+ meetings a day and need deep context fast, start with Claude: its A grade came from the depth of its email analysis. If Perplexity fixes its calendar reliability issues (missing meetings, wrong times), its B+ context quality could compete.

If you’re using ChatGPT for meeting prep and wondering why it feels shallow, this test shows why: it’s reading titles, not relationships.

The tool matters. The architecture matters. And for knowledge work that depends on context—who said what, when, and why it matters now—integration isn’t enough. You need understanding.