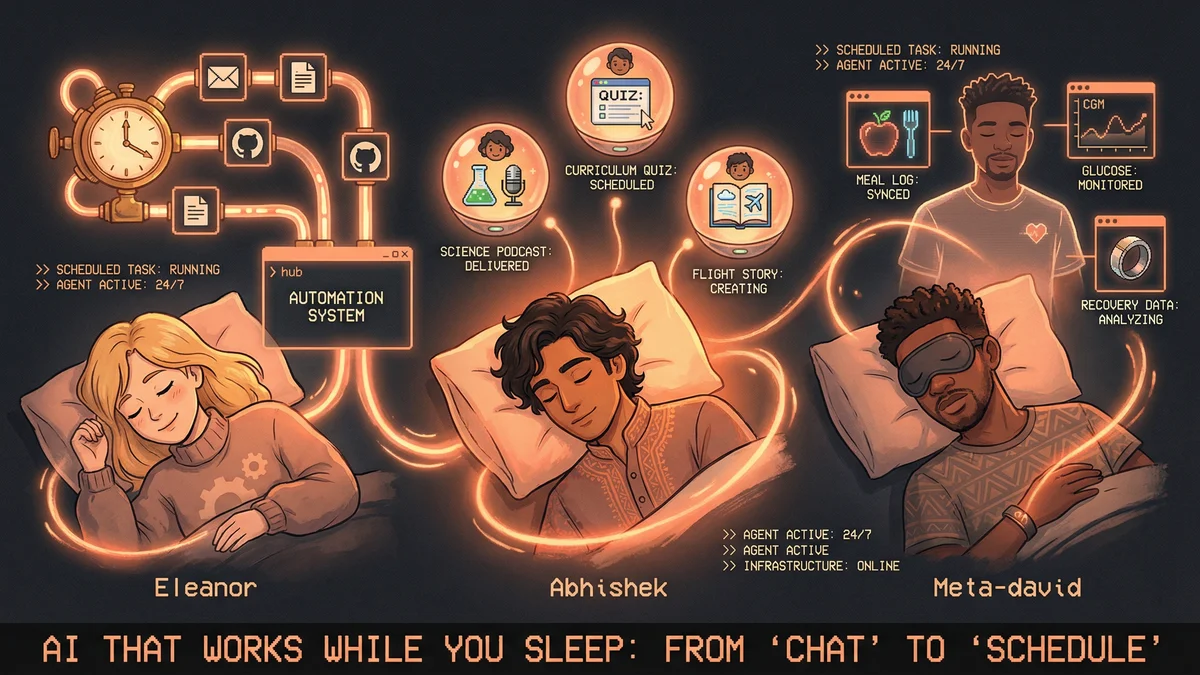

TL;DR

- Eleanor Berger replaced Zapier with natural language automation that “runs my life and business”

- Abhishek built parenting agents that deliver science podcasts and flight-tracking bedtime stories

- Meta-david’s health agent logs meals, maps glucose spikes, and delivers daily recommendations by morning

- Best for: Anyone tired of prompting AI repeatedly for the same tasks

- Key lesson: The shift from “chat” to “schedule” is when AI becomes useful infrastructure

Most people treat AI like a search engine. A few have started treating it like an employee on the payroll.

The difference isn’t better prompts or fancier models. It’s scheduling work to happen autonomously while you sleep, commute, or spend time with your kids. No input boxes. No waiting for responses. Just results when you need them.

Here are three people who made that shift - in business operations, parenting, and health management.

Eleanor Berger: “It Runs My Life and Business”

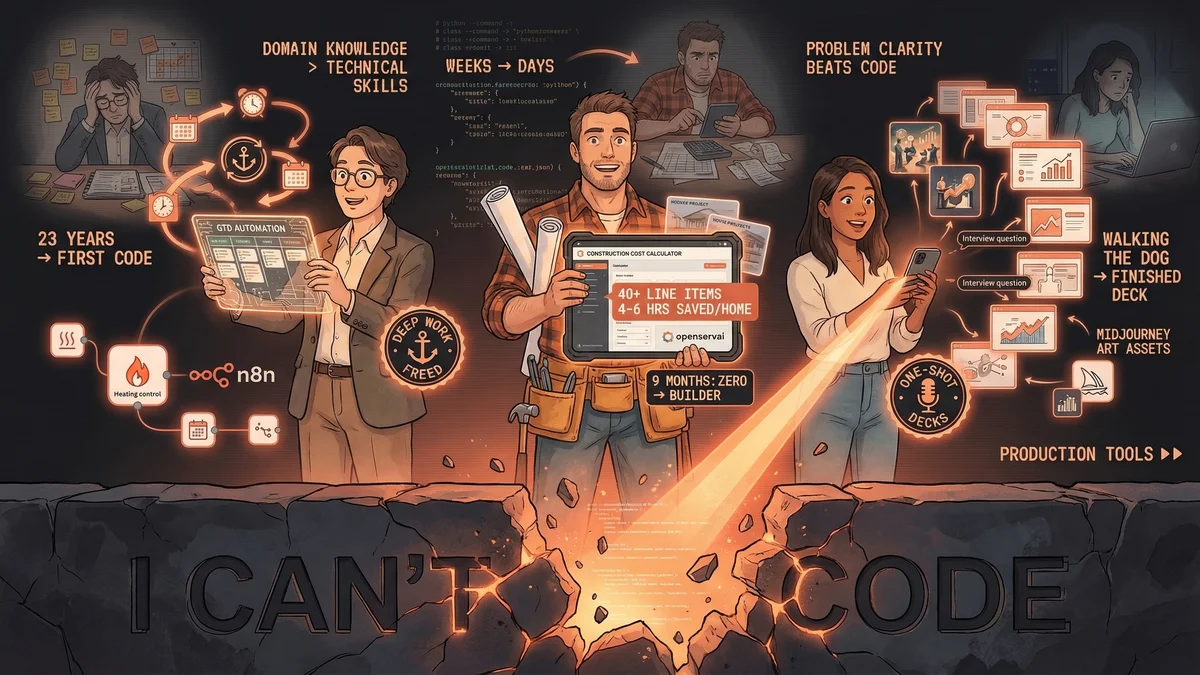

Eleanor Berger got tired of “coding with icons” in tools like Zapier and n8n. Every new automation meant dragging boxes, connecting nodes, debugging visual workflows. So she built something simpler: she tells Claude Code what automation she needs, and it creates and installs it.

The result? Pipelines between emails, tasks, GitHub, docs, and accounting - anything that doesn’t require her direct involvement. She describes it as a complete automation system that “basically runs my life and my business now.”

The automations themselves aren’t agentic or “smart” - they’re boring plumbing. Email arrives, data moves, tasks update. What changed is how she builds them: plain English instead of configuration menus. Need a new pipeline? Describe it once, never touch it again.

She also built her own API wrappers for SaaS apps that don’t offer a public API, using direct HTTP calls to liberate data from UI-only tools. No browser automation (too slow and unreliable). Just direct requests whose parameters look like normal user interaction.
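To make the "direct HTTP calls" idea concrete, here is a minimal sketch of what such a wrapper might look like. It is not Eleanor's code: the endpoint, the cookie name, and the `build_export_request` helper are all hypothetical, and a real app would need its own URL, parameters, and authentication.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_export_request(base_url: str, session_cookie: str, params: dict) -> Request:
    """Build the same GET request a browser would send to a UI-only app.

    Everything here is an assumption for illustration: the URL, the query
    parameters, and the cookie all depend on the specific app.
    """
    query = urlencode(params)
    return Request(
        f"{base_url}?{query}",
        headers={
            "Cookie": session_cookie,      # reuse an existing logged-in session
            "Accept": "application/json",  # ask for data rather than HTML
        },
        method="GET",
    )


# Hypothetical endpoint and parameters
req = build_export_request(
    "https://app.example.com/internal/invoices",
    "session=abc",
    {"month": "2024-01", "format": "json"},
)
# Passing req to urllib.request.urlopen would actually send it.
```

The point of the design is that the request is built from plain parameters, so an automation can call it on a schedule without driving a browser.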

Her Anki flashcard creation skill is public on GitHub if you want to see the approach in action.

The shift: From building workflows manually to describing what you need once.

Abhishek: Science Podcasts and Flight-Tracking Bedtime Stories

Abhishek built three AI agents for his kids - and none of them need access to personal data.

The Daily Science Podcast: Every morning, an agent scrapes kids’ science news sites, picks the top 3 stories based on both kids’ interests, and reframes them in age-appropriate, silly language. The result: three 25-second voice podcasts the kids “really enjoy listening to.”
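The "pick the top 3 stories" step can be sketched as a simple ranking. This is an illustrative guess at one way to do it, not Abhishek's implementation: the keyword-overlap scoring, the `pick_top_stories` name, and the sample data are all assumptions.

```python
def pick_top_stories(stories, interests, k=3):
    """Rank stories by how many interest keywords appear in the title.

    stories: list of dicts with a "title" key (hypothetical shape).
    interests: set of lowercase keywords describing what the kids like.
    """
    def score(story):
        words = set(story["title"].lower().split())
        return len(words & interests)

    # Highest-scoring stories first; keep only the top k
    return sorted(stories, key=score, reverse=True)[:k]


# Made-up sample data for illustration
stories = [
    {"title": "New dinosaur fossil found"},
    {"title": "Tax law changes"},
    {"title": "Rocket launch to moon"},
    {"title": "Dinosaur rocket toy on moon"},
    {"title": "Volcano erupts"},
]
interests = {"dinosaur", "rocket", "moon", "volcano"}
top = pick_top_stories(stories, interests)
```

A real agent would likely let the model judge relevance instead of counting keywords, but the shape is the same: score, sort, keep three.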

The Curriculum Quiz Generator: The agent has access to public curriculum data. It generates fun questions to test knowledge and explains concepts interactively. Educational testing without the teacher workload.

“Dada’s Journey” - Flight Tracking Storybook: When Abhishek travels, an agent monitors his flight location in real-time. It sends the kids updates about which states the plane is flying over and creates a bedtime storybook from the journey. Emotional connection built from public flight data.

All three agents work with publicly available information, with no surveillance-level family data required. The value lies in creative transformation rather than data access: news sites become podcasts, curriculum becomes quizzes, flight paths become stories.

Hiten Shah called them “adorable.” Karina Q agreed. But the real test: the kids actually listen.

The shift: From manually creating content to waking up with it already prepared.

Meta-david: “From Tracking to Delegating”

Meta-david’s health agent runs around the clock, including while he sleeps or spends time with his kids.

The workflow:

- Text a photo or description of a meal → agent logs macros automatically

- Macros sync to Apple Health before the meal is finished

- Continuous glucose monitor (CGM) catches glucose movement

- Agent maps spikes back to specific meals and identifies what caused them

- Overnight: agent pulls Oura recovery data and reviews tomorrow’s schedule

- By morning: personalized food and activity recommendations waiting in his inbox
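The spike-to-meal step in the workflow above can be sketched as a time-window match. This is a simplified heuristic under stated assumptions, not Meta-david's actual logic: the 140 mg/dL threshold, the two-hour window, and the `attribute_spikes` name are all illustrative choices.

```python
from datetime import datetime, timedelta


def attribute_spikes(meals, readings, threshold=140, window=timedelta(hours=2)):
    """Map high glucose readings to the most recent meal before them.

    meals: list of (time, name) tuples.
    readings: list of (time, mg_dl) tuples from a CGM.
    threshold and window are assumed values, not medical guidance.
    Returns {meal_name: peak_reading} for meals followed by a spike.
    """
    attributed = {}
    for r_time, value in readings:
        if value < threshold:
            continue
        # Meals eaten at or before this reading, within the window
        candidates = [
            (t, name) for t, name in meals
            if timedelta(0) <= r_time - t <= window
        ]
        if candidates:
            _, name = max(candidates)  # latest meal wins
            attributed[name] = max(attributed.get(name, 0), value)
    return attributed


# Made-up sample day
day = datetime(2024, 1, 1)
meals = [(day.replace(hour=12), "salad"), (day.replace(hour=15), "rice bowl")]
readings = [
    (day.replace(hour=13), 110),
    (day.replace(hour=15, minute=45), 165),
    (day.replace(hour=16, minute=10), 150),
    (day.replace(hour=20), 145),
]
spikes = attribute_spikes(meals, readings)
```

This only finds which meal preceded a spike. Deciding which ingredient was responsible, as in the rice example, would need the logged macros as well.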

He describes it as shifting “from tracking to delegating.” Most health apps are passive dashboards - you log data, maybe glance at charts. This agent does the analysis work overnight and surfaces actionable insights before the day starts.

No typing macros into an app. No reviewing glucose graphs. No cross-referencing meals with metrics. Just: “Why did my glucose spike?” answered with “The rice in your 3pm bowl - try quinoa instead.”

The agent uses his CGM, Oura Ring, and Apple Health as data sources. The integration isn’t just collection - it’s causal mapping. Glucose spike → exact food → specific adjustment.

The shift: From reviewing dashboards to receiving recommendations before you ask.

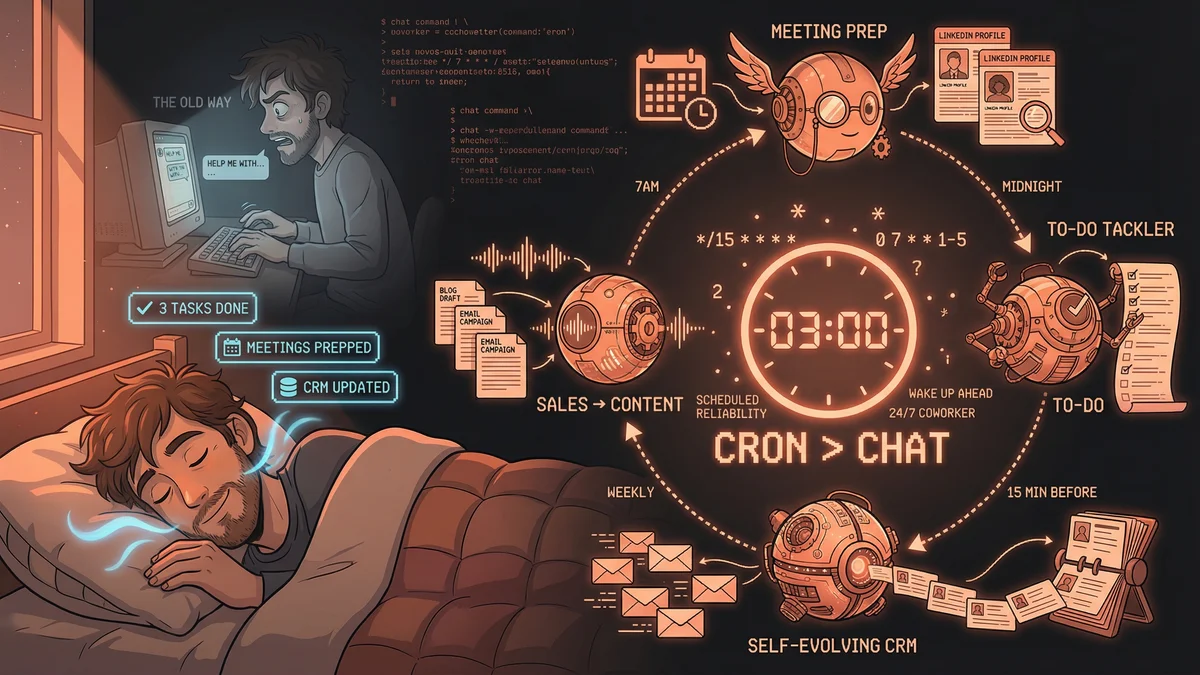

The Pattern: Schedules Over Prompts

All three share the same unlock: they stopped asking AI for things and started scheduling it to do work autonomously.

Eleanor doesn’t open Zapier to build a workflow. She describes it once and moves on.

Abhishek doesn’t prompt AI to write bedtime stories every night. The flight-tracking agent does it automatically.

Meta-david doesn’t ask “What should I eat tomorrow?” The agent has already analyzed his recovery data and calendar overnight.

The value isn’t intelligence. It’s reliability. Agents are “infinitely consistent” - they don’t forget, get distracted, or decide tonight’s too busy for meal logging. They just run.
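"They just run" comes down to a schedule. As a minimal sketch, here is how a daily job might work out its next run time. The `next_run` helper and the 6:00 run time are assumptions for illustration. In practice a cron entry or the agent platform's own scheduler would handle this.

```python
from datetime import datetime, time, timedelta


def next_run(now: datetime, run_at: time) -> datetime:
    """Return the next datetime at which a once-a-day job should fire."""
    candidate = datetime.combine(now.date(), run_at)
    if candidate <= now:
        # Today's slot has already passed, so schedule for tomorrow
        candidate += timedelta(days=1)
    return candidate


# Hypothetical morning briefing scheduled for 06:00
after_slot = next_run(datetime(2024, 1, 1, 7, 30), time(6, 0))
before_slot = next_run(datetime(2024, 1, 1, 5, 0), time(6, 0))
```

Once the time is fixed, the job fires whether or not anyone remembers to ask.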

The real shift: Most people are still learning better prompts. A few realized the goal isn’t better conversations - it’s removing the conversation entirely.